What Is AI Context — And Why Does It Matter More Than Data?

If you've been reading about enterprise AI deployments, you've probably encountered a frustrating pattern: organizations invest heavily in AI, run promising pilots, and then struggle to scale anything meaningful into production.

The most common explanation you'll hear is that the data isn't good enough, or there isn't enough of it. So companies invest in bigger data lakes, more integrations, better pipelines.

And then the same problems persist.

Often, what's missing isn't more data but context. Below, we explain what AI context actually means, why it's distinct from raw data, and why getting this distinction right is one of the most important architectural decisions an organization can make.

The Difference Between Data and Context

These two terms are often used interchangeably, but they describe fundamentally different things.

Data is raw information: records, events, measurements, transactions. A customer ID. A machine sensor reading. A sales figure. A shipment status.

Context is the layer of meaning around that information: how it connects to other things, why it matters, and what should happen as a result.

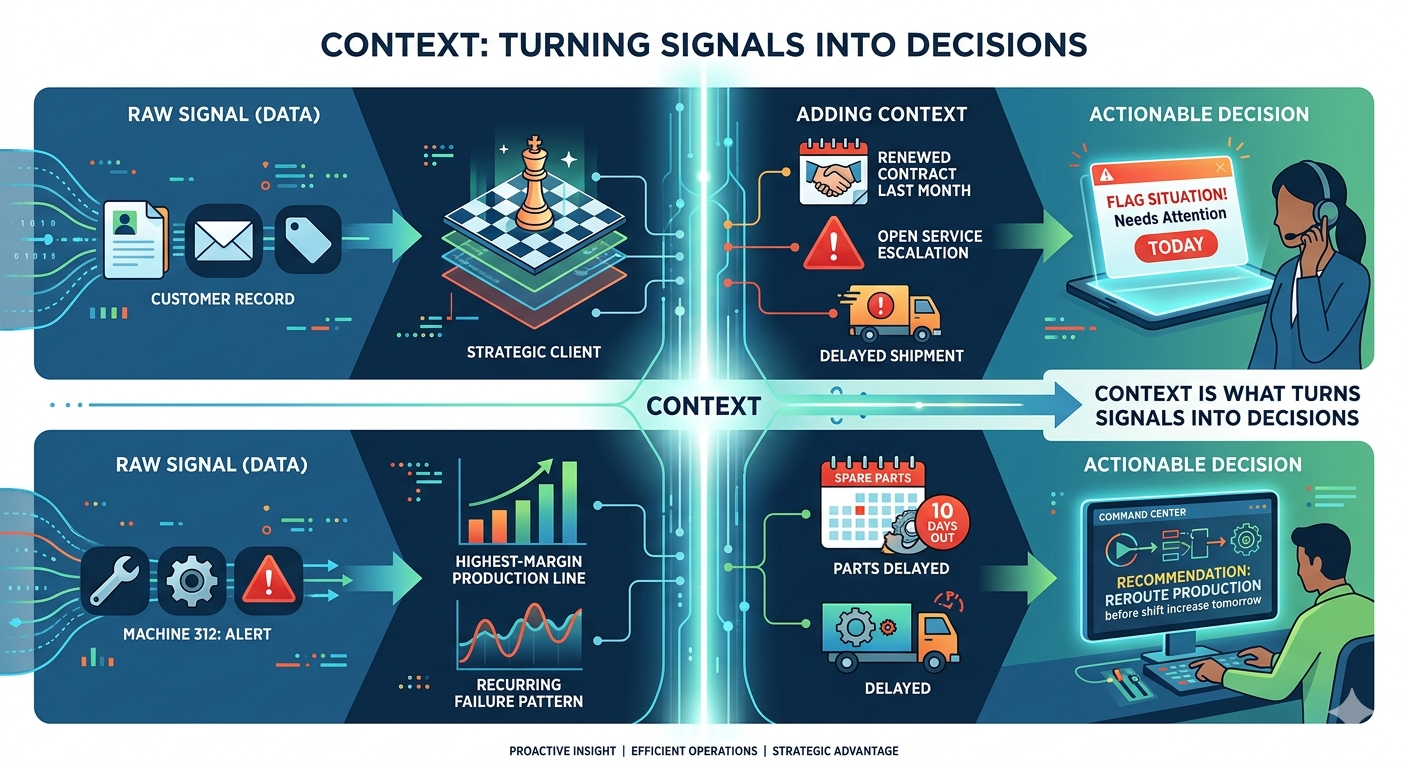

Here's a concrete illustration:

A customer record is data.

Knowing that customer is a strategic account, renewed three weeks ago, currently has an unresolved service escalation, and is tied to a shipment delayed by four days — that's context.

A machine alert is data.

Knowing that machine is on your highest-margin production line, has shown a recurring failure pattern over the last six months, and has no spare parts available for ten days — that's context.

The difference isn't trivial. An AI system with only the first piece of each pair will produce a generic response. An AI system with both pieces can tell you something operationally useful — and more importantly, something you can act on.
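To make the distinction concrete, here is a minimal sketch in Python. It assumes a hypothetical schema (`CustomerRecord`, `CustomerContext`, and their fields are illustrative names, not from any real CRM). The bare record supports only a generic statement. The same record wrapped in context yields a briefing someone can act on.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class CustomerRecord:
    """Raw data: the fact that this customer exists."""
    customer_id: str
    name: str


@dataclass
class CustomerContext:
    """Context: how the record relates to other facts that matter right now.

    All field names are hypothetical, chosen to mirror the example above.
    """
    record: CustomerRecord
    is_strategic: bool = False
    days_since_renewal: Optional[int] = None
    open_escalations: List[str] = field(default_factory=list)
    delayed_shipments: Dict[str, int] = field(default_factory=dict)  # shipment id -> days late


def summarize(ctx: CustomerContext) -> str:
    """Build a briefing line; with no context, only a generic statement is possible."""
    notes = []
    if ctx.is_strategic:
        notes.append("strategic account")
    if ctx.days_since_renewal is not None:
        notes.append(f"renewed {ctx.days_since_renewal} days ago")
    if ctx.open_escalations:
        notes.append(f"{len(ctx.open_escalations)} open escalation(s)")
    for shipment_id, days_late in ctx.delayed_shipments.items():
        notes.append(f"shipment {shipment_id} delayed {days_late} days")
    if not notes:
        return f"{ctx.record.name}: no additional context"
    return f"{ctx.record.name}: " + "; ".join(notes)


record = CustomerRecord("C-1042", "Acme Corp")
print(summarize(CustomerContext(record)))
# -> Acme Corp: no additional context
print(summarize(CustomerContext(
    record,
    is_strategic=True,
    days_since_renewal=21,
    open_escalations=["ESC-7"],
    delayed_shipments={"SH-88": 4},
)))
# -> Acme Corp: strategic account; renewed 21 days ago; 1 open escalation(s); shipment SH-88 delayed 4 days
```

The record itself never changes. Only the surrounding context does, and that is what turns the output from a lookup into a briefing.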

Why More Data Doesn't Automatically Fix This

The intuitive assumption is that AI quality improves with data volume. And to some extent, that's true for training large foundation models.

But in enterprise deployments, the problem is rarely that the model hasn't seen enough data. It's that the model doesn't understand the relationships between the data it has.

Consider a scenario: your AI system detects declining sales in a region and recommends increasing marketing spend. On the surface, that's a reasonable suggestion. But if the real cause is inventory stockouts driven by a logistics disruption — not weak demand — then more marketing doesn't help. It drives demand you can't fulfill.

The model had access to the sales data. What it lacked was the connection between sales performance, inventory levels, logistics events, and supplier status. That connective layer is context. And without it, even a sophisticated model produces confidently wrong recommendations.
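The sketch below contrasts the two situations in this scenario. Everything in it is hypothetical: `RegionSignals`, the source-system labels in its comments, and the three-day stockout threshold are illustrative assumptions, not a real diagnostic model. The point is structural. A function that sees only the sales series can't tell weak demand from unfulfillable demand. A function that can join sales with inventory, logistics, and supplier signals can check for a supply-side cause first.

```python
from dataclasses import dataclass


@dataclass
class RegionSignals:
    """Signals for one region, each from a different (hypothetical) source system."""
    sales_change_pct: float        # sales system, e.g. -12.0 means a 12% decline
    stockout_days: int             # inventory system: days key items were out of stock
    open_logistics_incidents: int  # logistics system: active disruptions on inbound lanes
    supplier_on_hold: bool         # procurement system: key supplier flagged


def recommend_from_sales_only(sales_change_pct: float) -> str:
    """What a system that sees only the sales series might conclude."""
    return "increase marketing spend" if sales_change_pct < 0 else "no action"


def recommend_with_context(s: RegionSignals, stockout_threshold: int = 3) -> str:
    """Check for a supply-side cause before treating a decline as weak demand."""
    if s.sales_change_pct >= 0:
        return "no action"
    supply_disrupted = s.open_logistics_incidents > 0 or s.supplier_on_hold
    if s.stockout_days >= stockout_threshold and supply_disrupted:
        # Demand exists but cannot be fulfilled: more marketing would widen the gap.
        return "restore supply first; hold marketing spend"
    return "investigate demand: review pricing and marketing"


signals = RegionSignals(sales_change_pct=-12.0, stockout_days=9,
                        open_logistics_incidents=1, supplier_on_hold=False)
print(recommend_from_sales_only(signals.sales_change_pct))  # -> increase marketing spend
print(recommend_with_context(signals))                      # -> restore supply first; hold marketing spend
```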

This is why data volume is a necessary but insufficient condition for effective enterprise AI.

What AI Context Actually Consists Of

For enterprise AI to perform reliably, it needs to understand several categories of operational meaning:

Entity relationships — How things connect to each other. Which customers are tied to which contracts. Which machines depend on which components. Which orders are linked to which suppliers.

Business rules and policies — What's allowed, what's restricted, what thresholds matter. Which regions operate under different pricing rules. Which incidents require escalation vs. monitoring.

Operational priorities — What matters most, and why. Which production line is highest-margin. Which customer has a critical service commitment. Which system failure cascades into others.

Historical behavior — What's normal vs. abnormal for a given entity or process. What failure patterns have appeared before. What seasonal or cyclical trends apply.

Current state — What's changed recently. What's actively in progress. What's pending human action.
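One way to picture these five categories working together is a single snapshot per entity. The sketch below is a hypothetical schema: `ContextSnapshot`, the `"<metric>_max"` rule naming, and the 3-sigma anomaly test are illustrative assumptions. It shows how history defines "abnormal" for this particular machine, and how priority changes what an anomaly means. A reading can stay inside the hard limit and still warrant escalation on a top-priority asset.

```python
from dataclasses import dataclass, field
from statistics import mean, stdev
from typing import Dict, List


@dataclass
class ContextSnapshot:
    """One entity's context, grouped into the five categories above (hypothetical schema)."""
    entity_id: str
    relationships: Dict[str, List[str]]   # entity relationships: relation -> related ids
    rules: Dict[str, float]               # business rules, e.g. {"vibration_max": 4.0}
    priority: int                         # operational priority: 1 = most important
    history: Dict[str, List[float]]       # historical behavior: past readings per metric
    current: Dict[str, float] = field(default_factory=dict)  # current state

    def is_abnormal(self, metric: str, z_limit: float = 3.0) -> bool:
        """Judge the current reading against this entity's own history (simple z-score)."""
        past = self.history[metric]
        mu, sigma = mean(past), stdev(past)  # stdev needs at least two readings
        if sigma == 0:
            return self.current[metric] != mu
        return abs(self.current[metric] - mu) / sigma > z_limit

    def should_escalate(self, metric: str) -> bool:
        """Escalate on a hard rule breach, or on any anomaly for a top-priority entity."""
        limit = self.rules.get(f"{metric}_max", float("inf"))
        breaches_rule = self.current[metric] > limit
        return breaches_rule or (self.priority == 1 and self.is_abnormal(metric))


press = ContextSnapshot(
    entity_id="machine:M-7",
    relationships={"on_line": ["line:L1"], "uses_part": ["part:P-22"]},
    rules={"vibration_max": 4.0},
    priority=1,
    history={"vibration": [2.0, 2.1, 1.9, 2.0, 2.2, 1.8]},
    current={"vibration": 2.9},
)
print(press.should_escalate("vibration"))  # -> True: within the rule, but abnormal on a priority-1 machine
```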

When an AI system can answer questions with reference to all of these layers, it stops being a search tool and starts being something closer to operational intelligence.

Why This Matters Especially for Generative AI

Generative AI adds another dimension to the context problem.

Large language models are fluent. They can summarize, draft, and converse in ways that feel remarkably capable. In many consumer applications, that fluency is most of what you need.

In enterprise settings, fluency isn't enough. When an employee asks an AI assistant which version of a contract is currently valid, or which inventory number is accurate as of today, or which incident requires immediate escalation — they need answers grounded in operational reality, not answers that are merely well-phrased.
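A common way to ground answers like these is to resolve the operational fact first, deterministically, and only then hand it to the language model. The sketch below assumes a hypothetical `ContractVersion` structure with status and effective dates. The prompt format is illustrative and does not follow any specific LLM API. Note that the newest document (`v3`) is a draft. A model reading raw files might quote it, whereas the context layer excludes it.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class ContractVersion:
    version: str
    status: str                   # hypothetical values: "signed", "draft", "superseded"
    effective_from: date
    effective_to: Optional[date]  # None means open-ended


def version_in_force(versions: List[ContractVersion], as_of: date) -> Optional[ContractVersion]:
    """Resolve the signed version in force on `as_of`; if periods overlap, the latest start wins."""
    candidates = [
        v for v in versions
        if v.status == "signed"
        and v.effective_from <= as_of
        and (v.effective_to is None or as_of <= v.effective_to)
    ]
    return max(candidates, key=lambda v: v.effective_from, default=None)


def grounded_prompt(question: str, versions: List[ContractVersion], as_of: date) -> str:
    """Put the resolved fact in front of the model instead of letting it guess from raw documents."""
    v = version_in_force(versions, as_of)
    fact = (f"Contract version in force on {as_of.isoformat()}: {v.version}"
            if v else f"No signed contract version is in force on {as_of.isoformat()}.")
    return f"Answer using only the facts below.\nFACT: {fact}\nQUESTION: {question}"


versions = [
    ContractVersion("v1", "signed", date(2022, 1, 1), date(2023, 12, 31)),
    ContractVersion("v2", "signed", date(2024, 1, 1), None),
    ContractVersion("v3", "draft", date(2024, 6, 1), None),  # newest file, but not in force
]
print(grounded_prompt("Which contract version is valid?", versions, date(2024, 7, 1)))
```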

The failure mode here is subtle and damaging: generative AI with weak enterprise context produces responses that sound authoritative and turn out to be wrong. After employees encounter this a few times, they stop using the tool. The investment gets written off. And the organization concludes that AI "isn't ready" — when the real issue was that the architecture wasn't ready.

Where the Current Infrastructure Falls Short

Most organizations have already invested significantly in data infrastructure: warehouses, data lakes, BI platforms, dashboards, reporting tools. These are valuable, and they're not going away.

But they were designed for storage and reporting, not for maintaining a live model of how the business operates. The questions they answer well are largely retrospective: what happened last quarter, what the inventory count was at the last refresh, what the trend has been over time.

The questions that matter for AI are relational and operational: what does this event mean in relation to everything else, who needs to know, what should happen next.

The gap between what current data infrastructure provides and what AI actually needs is where most enterprise AI initiatives quietly stall.

What an AI-Ready Context Layer Looks Like

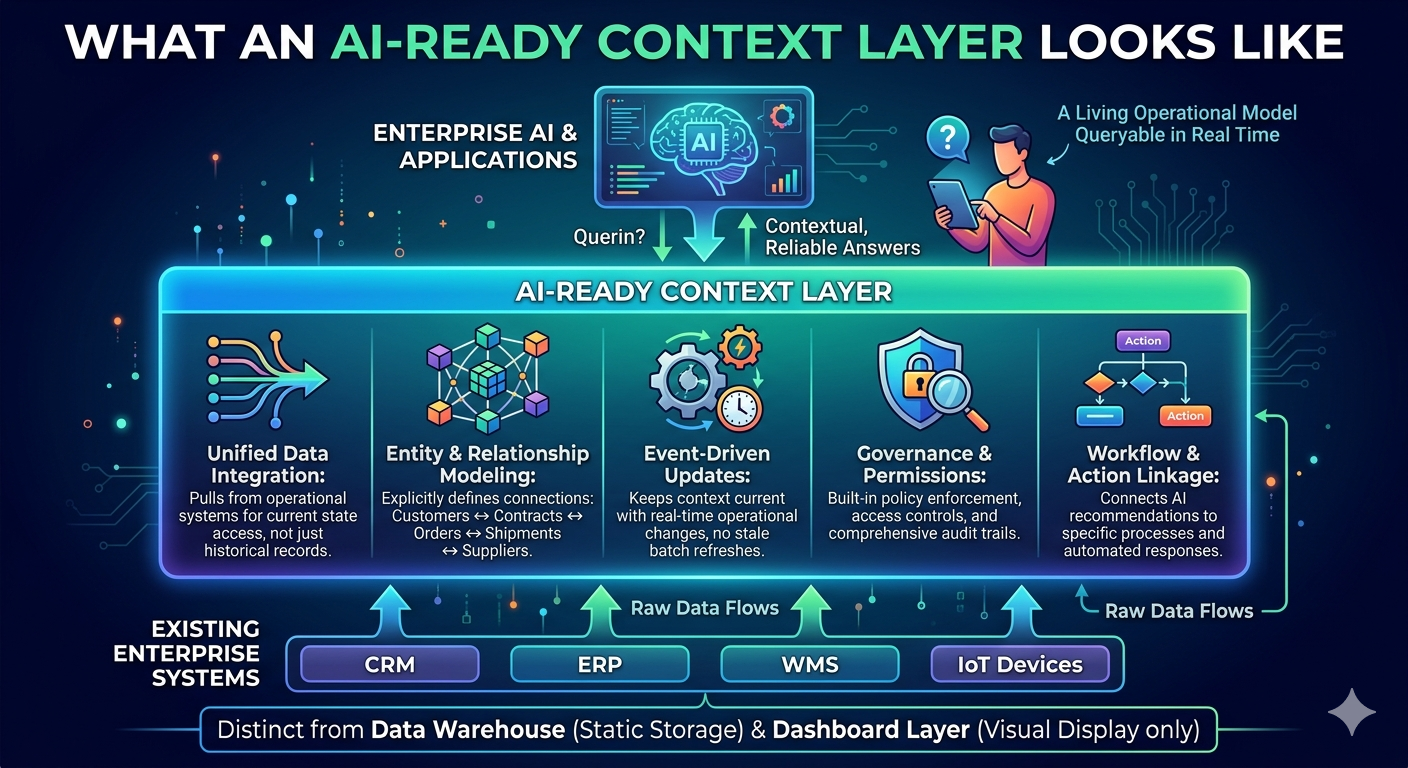

Building context for enterprise AI means creating a connected layer that sits across your existing systems and makes the relationships explicit. Practically, this involves:

Unified data integration — Pulling from operational systems, not just analytical stores, so the AI has access to current state, not just historical records.

Entity and relationship modeling — Making explicit how business objects connect: customers to contracts to orders to shipments to suppliers.

Event-driven updates — Keeping context current as operations change, rather than relying on batch refreshes that are already stale.

Governance and permissions — Ensuring AI operates within appropriate boundaries, with audit trails and policy enforcement built in.

Workflow and action linkage — Connecting AI recommendations to actual processes, so insight can translate into response.
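The five capabilities above can be sketched as one small in-memory object. This is a toy illustration under stated assumptions. `ContextLayer`, the `"<type>:<id>"` entity-id convention, the role-based `acl`, and the two-day delay threshold are all hypothetical, and the sketch does not depict the API of any product. It shows explicit typed relationships, event-driven state updates, permission-checked reads with an audit trail, and a workflow hook that fires when an event arrives.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Set, Tuple

Handler = Callable[[str, Dict[str, object]], None]


class ContextLayer:
    """Toy in-memory context layer; a hypothetical sketch, not any product's API.

    Entity ids are assumed to look like "<type>:<id>", e.g. "customer:C-1042".
    """

    def __init__(self, acl: Dict[str, Set[str]]):
        self.state: Dict[str, Dict[str, object]] = {}                    # current state per entity
        self.edges: Dict[str, Set[Tuple[str, str]]] = defaultdict(set)  # entity -> {(relation, other)}
        self.acl = acl                                                  # role -> entity types it may read
        self.audit: List[Tuple[str, str, str]] = []                     # (actor, action, entity) trail
        self.handlers: List[Handler] = []                               # workflow hooks

    def relate(self, a: str, relation: str, b: str) -> None:
        """Entity and relationship modeling: record an explicit, typed link."""
        self.edges[a].add((relation, b))

    def on_event(self, entity_id: str, changes: Dict[str, object]) -> None:
        """Event-driven update: apply the change now, then notify workflow hooks."""
        self.state.setdefault(entity_id, {}).update(changes)
        self.audit.append(("system", "update", entity_id))
        for handler in self.handlers:
            handler(entity_id, changes)

    def read(self, role: str, entity_id: str) -> Dict[str, object]:
        """Governed read: check permissions and log every access, allowed or not."""
        entity_type = entity_id.split(":", 1)[0]
        if entity_type not in self.acl.get(role, set()):
            self.audit.append((role, "denied", entity_id))
            raise PermissionError(f"{role} may not read {entity_id}")
        self.audit.append((role, "read", entity_id))
        related = sorted(self.edges.get(entity_id, set()))
        return {"state": dict(self.state.get(entity_id, {})), "related": related}


layer = ContextLayer(acl={"ai_assistant": {"customer", "shipment"}})
alerts: List[str] = []


def flag_delays(entity_id: str, changes: Dict[str, object]) -> None:
    """Workflow linkage: route significant delays to a follow-up queue (hypothetical threshold)."""
    delay = changes.get("delay_days", 0)
    if isinstance(delay, int) and delay > 2:
        alerts.append(entity_id)


layer.handlers.append(flag_delays)
layer.relate("customer:C-1042", "awaits", "shipment:SH-88")
layer.on_event("shipment:SH-88", {"delay_days": 4})
print(layer.read("ai_assistant", "customer:C-1042"))
print(alerts)  # -> ['shipment:SH-88']
```

A production system would replace each piece (a graph store, a message stream, a policy engine), but the division of responsibilities stays the same.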

This is architecturally distinct from a data warehouse or a dashboard layer. It's closer to a living operational model of the business that AI can query in real time.

How This Works in Practice: Industry Examples

Manufacturing

A sensor reports abnormal vibration. With context, the AI knows this machine is on a bottleneck line, spare parts are unavailable for ten days, a shift increase starts tomorrow, and rerouting production to another line is feasible. Instead of a generic maintenance alert, it produces a prioritized recommendation: reroute production now, before the shift increase puts more load on the bottleneck.
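A minimal sketch of how that prioritization might be expressed, assuming hypothetical context fields (`MachineContext` and its attributes) and an illustrative P1/P2/P3 scheme. The decision hinges on comparing facts from different systems. Here the parts lead time from procurement is weighed against the shift schedule from planning.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class MachineContext:
    """Hypothetical context fields mirroring the manufacturing example above."""
    on_bottleneck_line: bool
    spare_parts_lead_days: int
    days_until_shift_increase: Optional[int]  # None if no ramp-up is planned
    reroute_feasible: bool


def maintenance_recommendation(alert: str, ctx: MachineContext) -> str:
    """Turn a raw alert into a prioritized action using operational context."""
    if not ctx.on_bottleneck_line:
        return f"{alert}: P3, schedule routine inspection"
    # Parts that arrive after demand rises leave the bottleneck exposed during the ramp-up.
    parts_too_late = (
        ctx.days_until_shift_increase is not None
        and ctx.spare_parts_lead_days > ctx.days_until_shift_increase
    )
    if parts_too_late and ctx.reroute_feasible:
        return (f"{alert}: P1, reroute production now and order parts "
                f"({ctx.spare_parts_lead_days}-day lead time)")
    if parts_too_late:
        return f"{alert}: P1, order parts and plan reduced output"
    return f"{alert}: P2, order parts and inspect at the next changeover"


ctx = MachineContext(on_bottleneck_line=True, spare_parts_lead_days=10,
                     days_until_shift_increase=1, reroute_feasible=True)
print(maintenance_recommendation("abnormal vibration", ctx))
# -> abnormal vibration: P1, reroute production now and order parts (10-day lead time)
```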

Logistics

A port delay is detected. With context, the AI knows which downstream orders are affected, which customers have contractual SLA exposure, and which alternative routing options exist at current capacity and cost. Response planning starts immediately rather than after a manual investigation.
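Finding "which downstream orders are affected" is, at its core, a graph traversal over explicit relationships. The sketch below uses a hypothetical dependency graph (`DEPENDENTS`, `SLA_CUSTOMERS`, and all ids are made up for illustration) and a standard breadth-first search. It collects everything transitively affected by the delayed port, then filters for orders and SLA-bound customers.

```python
from collections import deque
from typing import Dict, List, Set

# Hypothetical dependency graph: each edge points from something to what depends on it.
DEPENDENTS: Dict[str, List[str]] = {
    "port:PORT-A": ["shipment:SH-88", "shipment:SH-91"],
    "shipment:SH-88": ["order:O-1", "order:O-2"],
    "shipment:SH-91": ["order:O-3"],
    "order:O-1": ["customer:ACME"],
    "order:O-2": ["customer:GLOBEX"],
    "order:O-3": ["customer:ACME"],
}
SLA_CUSTOMERS: Set[str] = {"customer:ACME"}  # customers with contractual SLAs (assumed)


def downstream(start: str, graph: Dict[str, List[str]]) -> Set[str]:
    """Breadth-first search: everything transitively affected by `start`."""
    seen: Set[str] = set()
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def impact_report(event_source: str) -> Dict[str, List[str]]:
    """Summarize affected orders and SLA-exposed customers for one disruption."""
    affected = downstream(event_source, DEPENDENTS)
    return {
        "orders": sorted(n for n in affected if n.startswith("order:")),
        "sla_exposure": sorted(affected & SLA_CUSTOMERS),
    }


print(impact_report("port:PORT-A"))
# -> {'orders': ['order:O-1', 'order:O-2', 'order:O-3'], 'sla_exposure': ['customer:ACME']}
```

Because the relationships are stored ahead of time, the answer is a query rather than a manual investigation. The same traversal works for the smart-city case, with incidents, routes, and districts as nodes.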

Smart Cities / Public Sector

A traffic incident is reported. With context, the AI connects it to nearby congestion, bus route delays, emergency vehicle access requirements, active roadworks, and the districts that need citizen alerts. Coordination happens faster because the relationships are already mapped.

The Organizational Implication

The shift this requires isn't primarily a technology purchase. It's an architectural rethinking.

Organizations that treat AI context as a layer to be built — with the same seriousness they've applied to data storage and analytics — tend to get deployments that scale. Organizations that buy AI tools and assume the context will figure itself out tend to get pilots that don't.

The practical question isn't "which AI model should we use?" It's "does our data environment give any AI model what it actually needs to be useful here?"

What to Look for in a Platform

If you're evaluating infrastructure to support enterprise AI, the key capabilities to look for are:

Integration across operational systems (not just analytical ones)

Entity and relationship modeling across business domains

Real-time or near-real-time state updates

Governance, auditability, and permission controls

Native connectors for AI services and agents

Cross-functional visibility, not just departmental data silos

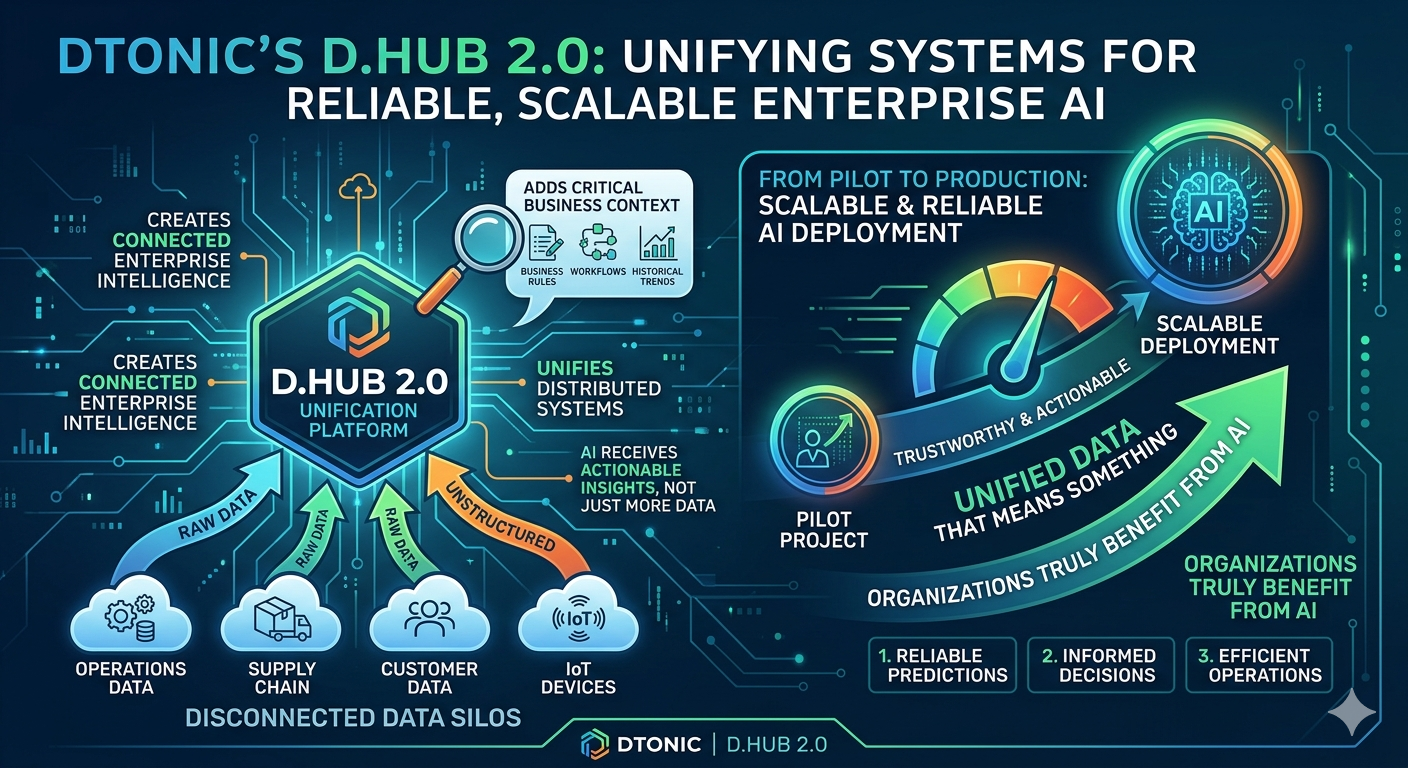

Platforms like Dtonic's D.Hub 2.0 are designed around exactly this premise — providing the connected enterprise intelligence layer that makes AI deployments reliable enough to move beyond pilot stage and into operational use across complex industries.

Summary

Data tells AI what exists. Context tells AI what it means, how things connect, and what action makes sense.

More data volume doesn't solve the context problem — it often makes it harder to see.

Most enterprise AI failures are architecture failures, not model failures.

Generative AI is especially vulnerable to weak context because fluent-sounding wrong answers are hard to catch until trust is already damaged.

Building an AI-ready context layer requires rethinking how data infrastructure connects operational systems, entities, relationships, and workflows — not just how it stores records.

The organizations that gain durable advantage from AI will be those whose data is structured, connected, and operationally meaningful — not just large.