Why Your AI Agents Don't Understand Your Business — And What to Do About It

Most enterprise AI projects don't fail because the models are bad. They fail because the AI has no idea how your business actually works.

There is a question that rarely gets asked clearly in enterprise AI conversations, but it sits underneath almost every failed pilot and every agent that behaves unexpectedly:

What does your AI actually know about your business?

Not data. Not documents. Not dashboards. But the actual operating logic of your organization — who your customers are, how your warehouses relate to your plants, which shipments are at risk, what a delay actually means, and what someone is authorized to do about it.

For most organizations, the honest answer is: very little.

And that gap — between the data AI can access and the business context it needs to act on — is the real reason enterprise AI struggles to move from demonstration to reliable operations.

The Problem with Data Alone

Modern enterprises are not short on data. ERP systems, CRM platforms, IoT sensors, logistics trackers, financial systems, edge devices — the data exists, and in large volume.

But data describes what is happening. It does not explain what it means or what should happen next.

Consider a manufacturing business. You have plants. You have warehouses. You have customers. You have inventory moving between all of them across a complex network. The data captures every transaction, every movement, every reading.

But the data alone cannot tell you:

Which warehouse shortage is critical versus routine

Whether a delayed shipment requires escalation or can be absorbed

What the relationship is between a production anomaly and a downstream customer commitment

Who has the authority to reroute product, and under what conditions

These are not data questions. They are business logic questions. And they require a layer that most AI deployments simply do not have.

What an Ontology Actually Is

The term "ontology" sounds abstract, but the underlying idea is straightforward.

An ontology is the nouns and verbs of your business, formally defined in a way that both humans and AI systems can work with. It models how your business actually operates, not how your software systems need data to be structured.

In practice, building an operational ontology means capturing three things:

Data: the current state of your business, drawn from every relevant system regardless of where it lives. Legacy platforms, cloud systems, edge devices, third-party feeds. The goal is a unified, connected picture of what is happening right now.

Logic: the rules, models, and thresholds that determine how to think about that data. This might be simple rules (orders above a certain value require approval), predictive models (a forecast for demand at a given location), or optimization engines that determine the best allocation of resources. The logic layer is what gives data its meaning.

Actions: the defined set of things that can actually be done in response. Writing back to an ERP system to move product. Triggering a workflow. Escalating to a human decision-maker. Updating a financial record. The actions layer is what connects insight to real-world consequence.

When these three layers are unified into a coherent model, you have something powerful: a digital twin of your business — not just a visual representation, but a living, reasoned model of how your organization operates, what its current state is, and what responses are available.
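To make the three layers concrete, here is a minimal Python sketch of how data, logic, and actions might sit together in one model. Everything in it is an assumption made for illustration: the `Warehouse` fields, the two-day threshold, the `planner` role, and the `request_replenishment` action are hypothetical and do not describe any particular product.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Warehouse:
    """Data layer: the current state of one entity (fields are hypothetical)."""
    name: str
    on_hand_units: int
    daily_demand_units: int


def days_of_cover(w: Warehouse) -> float:
    """Logic layer: how many days current stock lasts at current demand."""
    if w.daily_demand_units <= 0:
        return float("inf")
    return w.on_hand_units / w.daily_demand_units


def is_critical_shortage(w: Warehouse, threshold_days: float = 2.0) -> bool:
    """Logic layer: a threshold rule; 2.0 days is an assumed value."""
    return days_of_cover(w) < threshold_days


@dataclass
class Action:
    """Actions layer: a named response and the role allowed to trigger it."""
    name: str
    required_role: str
    run: Callable[[Warehouse], str]


def request_replenishment(w: Warehouse) -> str:
    # Stand-in for a write-back to an ERP system; here it only returns a message.
    return f"replenishment requested for {w.name}"


ACTIONS = {
    "request_replenishment": Action(
        "request_replenishment", "planner", request_replenishment
    ),
}


def respond(w: Warehouse, role: str) -> str:
    """Tie the layers together: read state, apply the rule, pick an allowed action."""
    if not is_critical_shortage(w):
        return "no action needed"
    action = ACTIONS["request_replenishment"]
    if role != action.required_role:
        return "escalate to planner"
    return action.run(w)
```

The point of the sketch is the separation: the state lives in one place, the rule that interprets it in another, and the permitted responses in a third, so that any caller (a person, an app, or an agent) goes through the same definitions.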

Why This Is the Missing Context for AI

Large language models are remarkable tools. But they have a fundamental limitation that is often understated:

They were not trained on your business.

They do not know your customer segmentation logic. They do not know which of your suppliers is considered high-risk. They do not know that a particular product line has a 72-hour lead time and cannot be expedited. They do not know what your approval hierarchy looks like or which regulatory constraints apply to a given transaction.

When you deploy an AI agent without giving it this context, you are essentially asking a highly capable but completely uninformed contractor to run your operations. The results tend to be inconsistent at best and actively harmful at worst.

The ontology solves this. When an AI agent has access not just to data but to the full operational context — the entities, the relationships, the rules, the authorized actions — it can reason meaningfully about your business rather than guessing. The LLM can call a deterministic model. It can evaluate a situation against defined thresholds. It can trigger the right action in the right system without needing a human to manually bridge the gap between insight and response.
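One way to picture an LLM calling a deterministic model is a small registry of named functions that the agent may invoke, with the business facts held in the functions rather than in the prompt. The sketch below assumes the agent emits a JSON tool call with `name` and `arguments` keys; that format, the tool names, and the `line-a` product line are hypothetical, and no specific LLM library is implied.

```python
import json

# Hypothetical business facts the model was never trained on.
LEAD_TIME_HOURS = {"line-a": 72}
NON_EXPEDITABLE = {"line-a"}


def check_expedite(product_line: str) -> dict:
    """Deterministic lookup: lead time and whether expediting is allowed."""
    return {
        "product_line": product_line,
        "lead_time_hours": LEAD_TIME_HOURS.get(product_line),
        "can_expedite": product_line not in NON_EXPEDITABLE,
    }


def assess_delay(delay_hours: float, slack_hours: float) -> dict:
    """Deterministic threshold: a delay is at risk once it exceeds the slack."""
    return {"at_risk": delay_hours > slack_hours}


# Only functions listed here can be reached by the agent.
TOOLS = {"check_expedite": check_expedite, "assess_delay": assess_delay}


def dispatch(tool_call_json: str) -> dict:
    """Run a tool call proposed by the agent, rejecting anything unregistered."""
    call = json.loads(tool_call_json)
    name = call["name"]
    if name not in TOOLS:
        return {"error": f"unknown tool: {name}"}
    return TOOLS[name](**call["arguments"])
```

With this shape, the language model decides which question to ask, but the answer comes from a defined rule, so the same input always produces the same result.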

This is the difference between an AI that generates answers and an AI that runs operations.

The "Swivel Chair" Problem at Scale

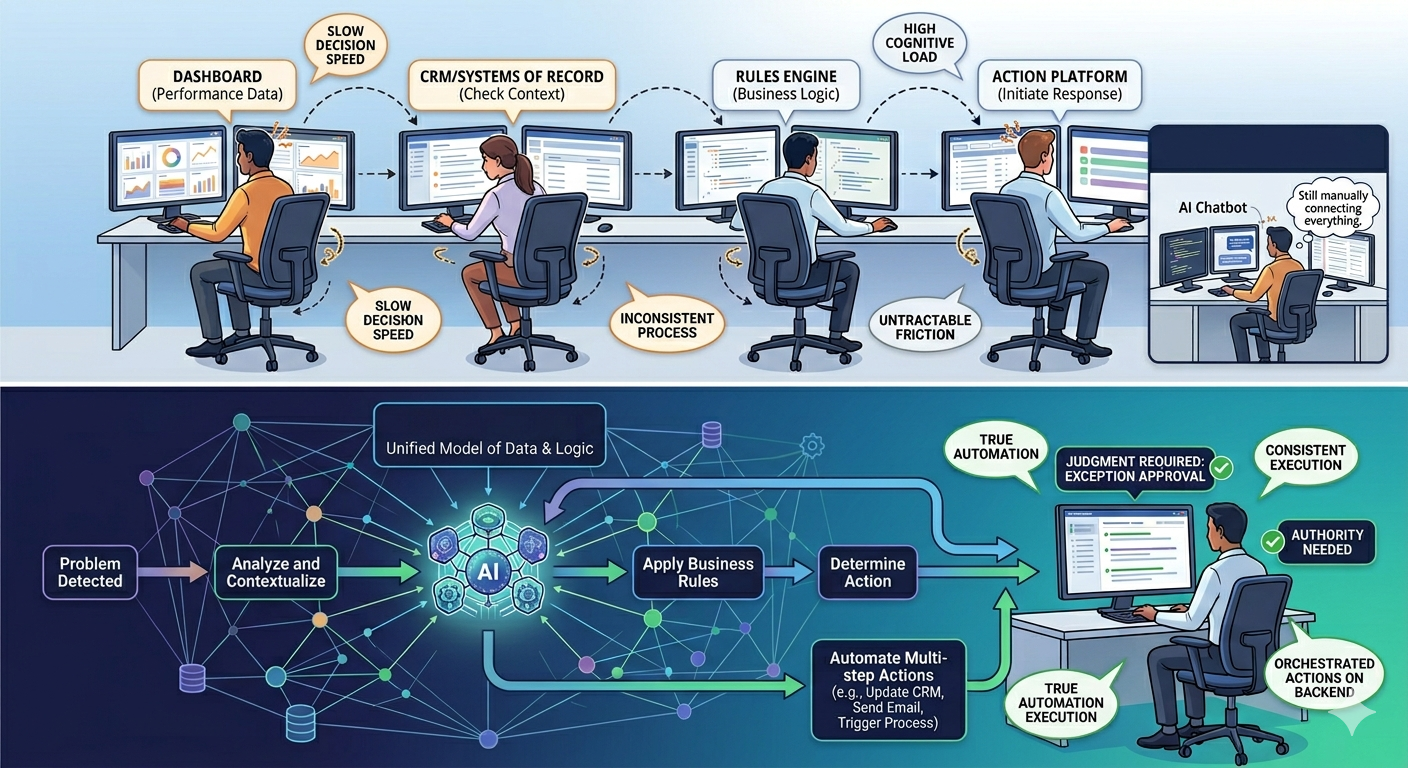

Anyone who has worked in enterprise operations knows the swivel chair: the analyst who reads a dashboard, opens a separate system to check context, switches to another tool to understand the rules, then manually initiates a response in yet another platform.

This friction is so normalized that it is rarely counted as a cost. But it is — in decision speed, in consistency, in the cognitive load placed on the people who keep operations running.

AI agents promise to eliminate the swivel chair. But they can only do so if the entire chain — from data to logic to action — is connected and accessible in one coherent model. Without that, you have not eliminated the swivel chair. You have just added an AI to the rotation.

The ontology is what makes true automation possible: complex, multi-step actions orchestrated on the back end, with humans involved only where judgment or authority is genuinely required.

From Insight to Action: A Different Kind of Platform

The implications of this architecture extend beyond any single use case.

When business logic is embedded in a shared operational layer — rather than scattered across individual applications or locked inside vendor silos — every tool in your ecosystem can draw on the same understanding. A new AI agent, a mobile app for the plant floor, a customer-facing interface, a back-end integration — all of them work from the same definition of how your business operates.

This is not just a technical convenience. It is a strategic asset. Business logic that is formally modeled, versioned, and accessible across your entire platform becomes something you own and build on over time. Business logic that lives only inside a specific application is logic you are renting — and it disappears the moment you move to a different tool.

The question for enterprise leaders is not whether to deploy AI agents. Most organizations have already started. The question is whether those agents are operating on a coherent, accurate model of the business — or whether they are guessing.

The Goal: AI and Humans Working Together on a Shared Model

The most important word in operational AI is not automation. It is coordination.

The goal is not to replace human judgment. It is to give AI agents enough context to handle the high-volume, rules-bound decisions that currently consume enormous human time — and to do so in a way that keeps humans informed, in control, and focused on the decisions that genuinely require them.
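As a rough illustration of that division of labor, the sketch below routes a rules-bound decision to the agent when it falls within a delegated limit and to a person otherwise, recording every decision so humans stay informed. The limit of 10,000, the `Decision` fields, and the in-memory `AUDIT_LOG` are assumptions made for the example.

```python
from dataclasses import dataclass

# Assumed delegated limit; a real value would come from the approval hierarchy.
AGENT_APPROVAL_LIMIT = 10_000.0


@dataclass
class Decision:
    action: str      # "approve" or "escalate"
    handled_by: str  # "agent" or "human"
    reason: str


# Every decision is recorded, including the ones the agent handles itself.
AUDIT_LOG: list[Decision] = []


def route_order(order_value: float, limit: float = AGENT_APPROVAL_LIMIT) -> Decision:
    """Approve within the delegated limit; otherwise hand off to a person."""
    if order_value <= limit:
        decision = Decision("approve", "agent", "within delegated limit")
    else:
        decision = Decision("escalate", "human", "exceeds delegated limit")
    AUDIT_LOG.append(decision)
    return decision
```

The design choice worth noting is that the agent never decides its own authority: the limit is part of the shared model, and the log gives people a view of what was handled without them.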

That requires a shared model. One that captures the data, the logic, and the actions. One that reflects how the business actually works, not how any particular software system needs it to be structured. One that gets richer and more capable over time as the business evolves.

Organizations that build this foundation are not just deploying AI. They are building the infrastructure that makes AI reliable — the layer that turns capability into operational reality.

Dtonic builds operational intelligence infrastructure for complex, data-rich environments. D.Hub 2.0 is designed to unify fragmented data, embed business logic, and coordinate AI-driven action across enterprise operations — giving your agents the context they need to act with confidence.